Suspected Coordinated Bribery

How SentraLink's AI connected a bribery call to a bank transaction, a construction site photo to a phone conversation, and a location mentioned in a call to cell tower records — across 23 uploaded files.

The Full Network — 23 Files, One Graph

An investigator uploads 23 files from a suspect's case: 20 phone call recordings, a bank statement with 4,000 transactions, call detail records (CDR) with 29 entries, and a photo obtained from social media. SentraLink automatically transcribes every call, parses every spreadsheet, analyzes the photo, and builds an intelligence graph — 63 interconnected nodes across 7 entity types: Documents, Persons, Evidence, Voice Signatures, Locations, Amounts, and a Photo.

Automatic Processing: 20 phone calls transcribed with speaker diarization, 4,000 bank transactions parsed, 29 CDR records mapped to cell towers, and 1 photo analyzed by computer vision — all without manual intervention.

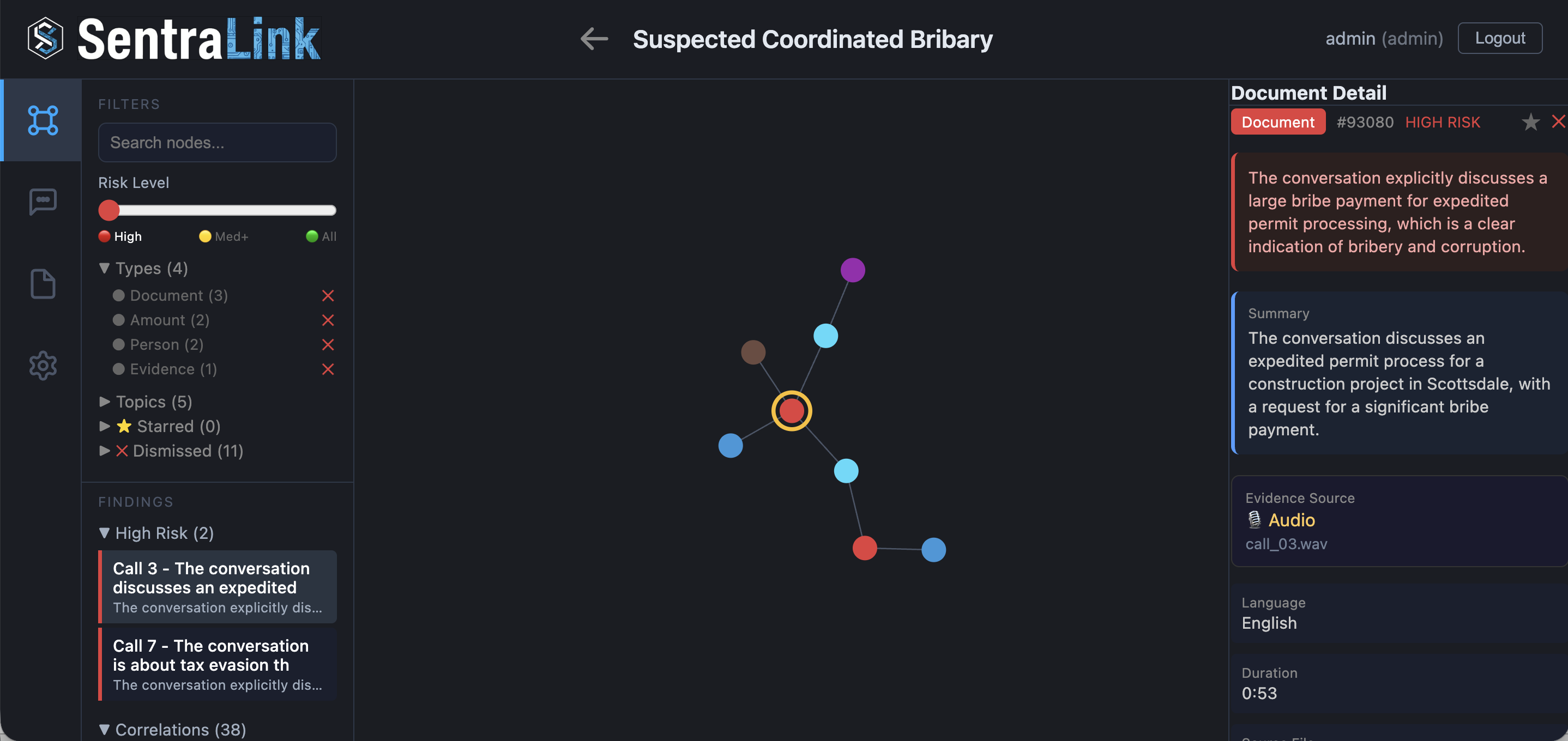
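One way to picture the intelligence graph described above is as a set of typed nodes joined by undirected links. The sketch below is illustrative only: the entity types come from this case study, but the `IntelligenceGraph` class, its methods, and the node IDs are hypothetical and do not describe SentraLink's internal data model.

```python
from dataclasses import dataclass, field

# Entity types named in the case study; everything else here is a hypothetical sketch.
ENTITY_TYPES = {
    "Document", "Person", "Evidence", "Voice Signature",
    "Location", "Amount", "Photo",
}


@dataclass
class IntelligenceGraph:
    nodes: dict = field(default_factory=dict)  # node_id -> entity type
    edges: dict = field(default_factory=dict)  # node_id -> set of linked node_ids

    def add_node(self, node_id, entity_type):
        if entity_type not in ENTITY_TYPES:
            raise ValueError(f"unknown entity type: {entity_type}")
        self.nodes[node_id] = entity_type
        self.edges.setdefault(node_id, set())

    def link(self, a, b):
        # Links are undirected, so store the connection on both endpoints.
        for node_id in (a, b):
            if node_id not in self.nodes:
                raise KeyError(node_id)
        self.edges[a].add(b)
        self.edges[b].add(a)

    def neighbors(self, node_id, entity_type=None):
        # Optionally restrict the result to one entity type.
        return sorted(
            n for n in self.edges[node_id]
            if entity_type is None or self.nodes[n] == entity_type
        )


graph = IntelligenceGraph()
graph.add_node("call_3", "Document")
graph.add_node("cdr", "Document")
graph.add_node("scottsdale", "Location")
graph.link("call_3", "scottsdale")
graph.link("cdr", "scottsdale")
print(graph.neighbors("scottsdale"))  # ['call_3', 'cdr']
```

Storing the entity type on each node makes it possible to ask questions such as "which Locations are linked to this call?" with a single filtered neighbor lookup.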

A Call About Expediting a Permit

Out of 20 transcribed calls, SentraLink flags Call 3. The 53-second conversation discusses an expedited permit process for a construction project in Scottsdale, with a request for a significant payment. The speakers use coded language — “an envelope”, “something for his trouble”, “our friend”, “hand to hand” — and end with “this conversation never happened.”

Coded Language Detection: SentraLink identifies that terms like “envelope”, “our friend”, and “fast track it” are being used as coded language for arranging an illicit payment — not a routine business conversation.

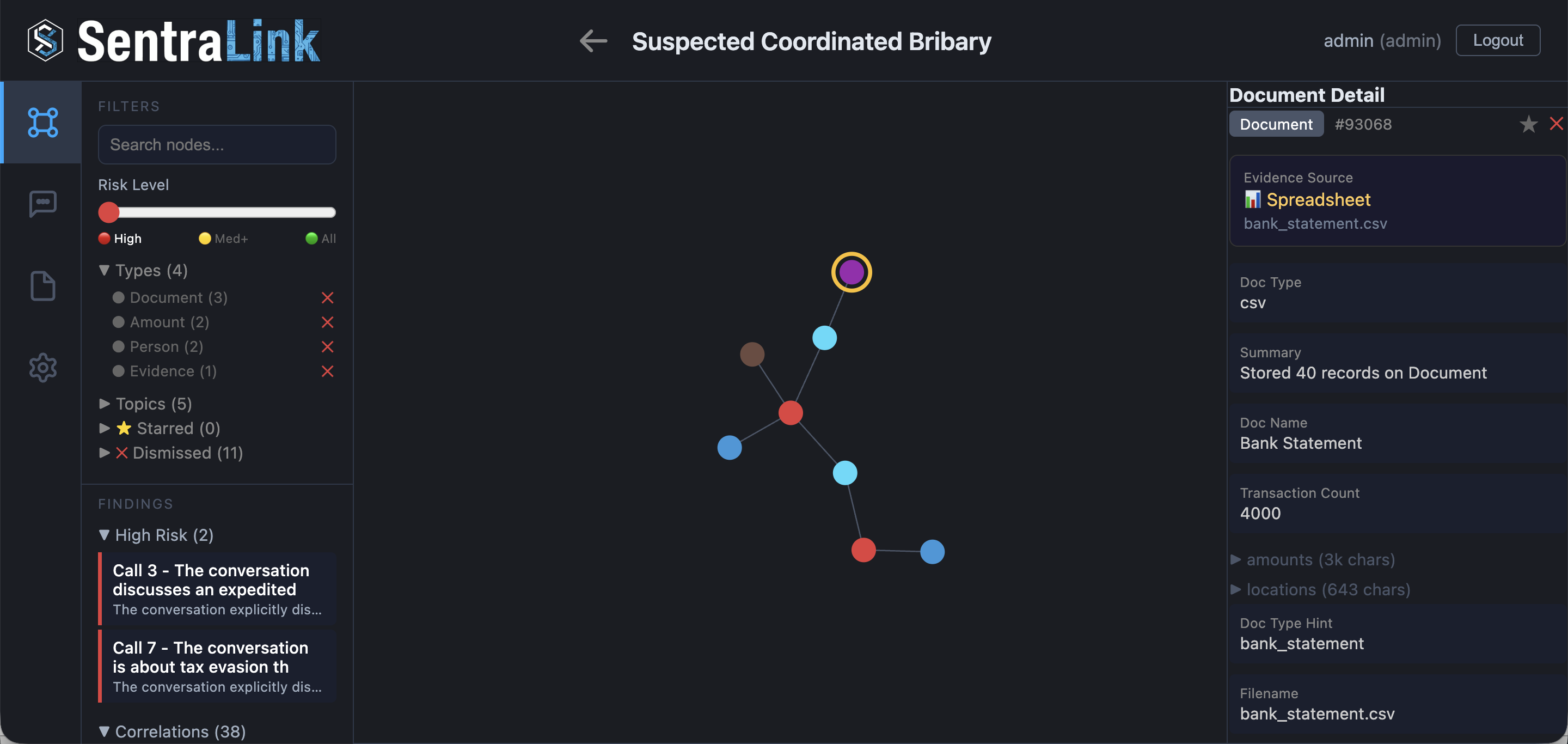

The Bank Statement Confirms the Amount

SentraLink correlates the amount mentioned in Call 3 to the uploaded bank statement. Out of 4,000 transactions in the bank statement, the AI identifies a matching cash withdrawal that corresponds to the payment discussed in the call.

Cross-Evidence Correlation: The amount mentioned verbally in a phone call is automatically matched to a specific transaction in a bank statement — connecting audio evidence to financial records without manual cross-referencing.

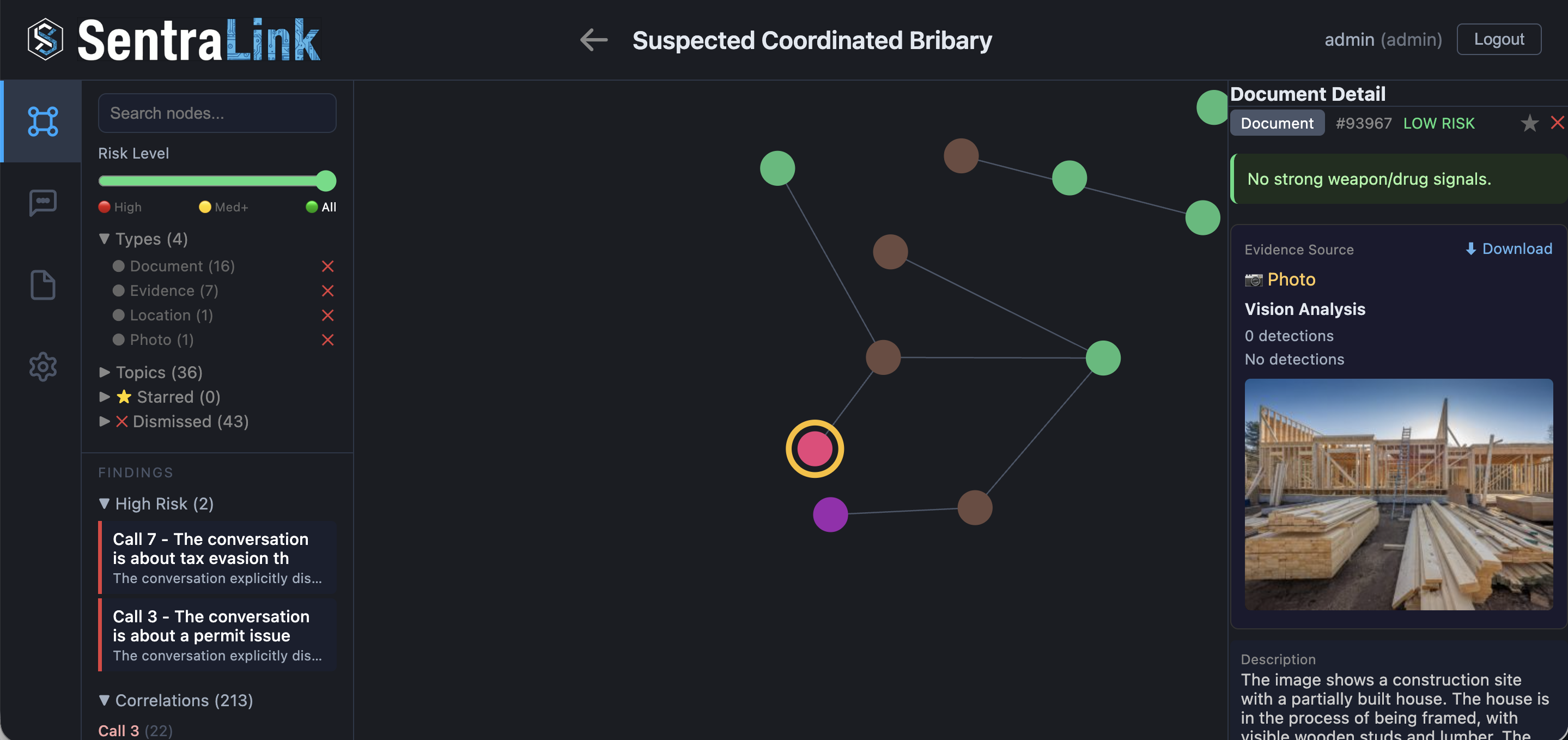
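The amount-to-transaction matching described above can be approximated as an exact-value lookup. The sketch below assumes the transcript already contains the amount as digits (a spoken "fifteen thousand" would need normalizing first), and the `Transaction` fields, the `cash_withdrawal` label, and the sample figures are invented for illustration. It is not SentraLink's actual matching logic.

```python
import re
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Transaction:
    tx_id: str
    kind: str        # hypothetical labels, e.g. "cash_withdrawal", "card_payment"
    amount: Decimal


# Matches figures such as "$15,000", "15000" or "15,000.00".
AMOUNT_RE = re.compile(r"\$?\s?(\d{1,3}(?:,\d{3})+|\d+)(?:\.(\d{2}))?")


def amounts_in_transcript(text):
    """Return every numeric amount found in the transcript as a Decimal."""
    found = []
    for match in AMOUNT_RE.finditer(text):
        whole = match.group(1).replace(",", "")
        cents = match.group(2) or "00"
        found.append(Decimal(f"{whole}.{cents}"))
    return found


def match_transactions(text, transactions, kind="cash_withdrawal"):
    """Return transactions of the given kind whose amount appears in the transcript."""
    wanted = set(amounts_in_transcript(text))
    return [t for t in transactions if t.kind == kind and t.amount in wanted]


# Invented sample data, not taken from the case files.
transactions = [
    Transaction("t1", "card_payment", Decimal("15000.00")),
    Transaction("t2", "cash_withdrawal", Decimal("15000.00")),
    Transaction("t3", "cash_withdrawal", Decimal("200.00")),
]
hits = match_transactions("He wants 15,000 in an envelope, hand to hand.", transactions)
print([t.tx_id for t in hits])  # ['t2']
```

Using `Decimal` rather than floats avoids rounding mismatches when comparing currency values. A production matcher would likely also consider date proximity and near-matches, which this sketch leaves out.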

A Photo From Social Media

A photo obtained from a social media post was uploaded alongside the other evidence. SentraLink's vision AI analyzes it and identifies a construction site: a partially built house with wooden framing, lumber stacks, and construction materials. It detects no weapons or drugs, but it generates a detailed description of the scene and adds it to the intelligence graph.

Multi-Modal Analysis: Even when a photo contains no illegal content, SentraLink's vision AI describes what it sees and adds it to the graph — enabling correlations to other evidence types that mention the same context.

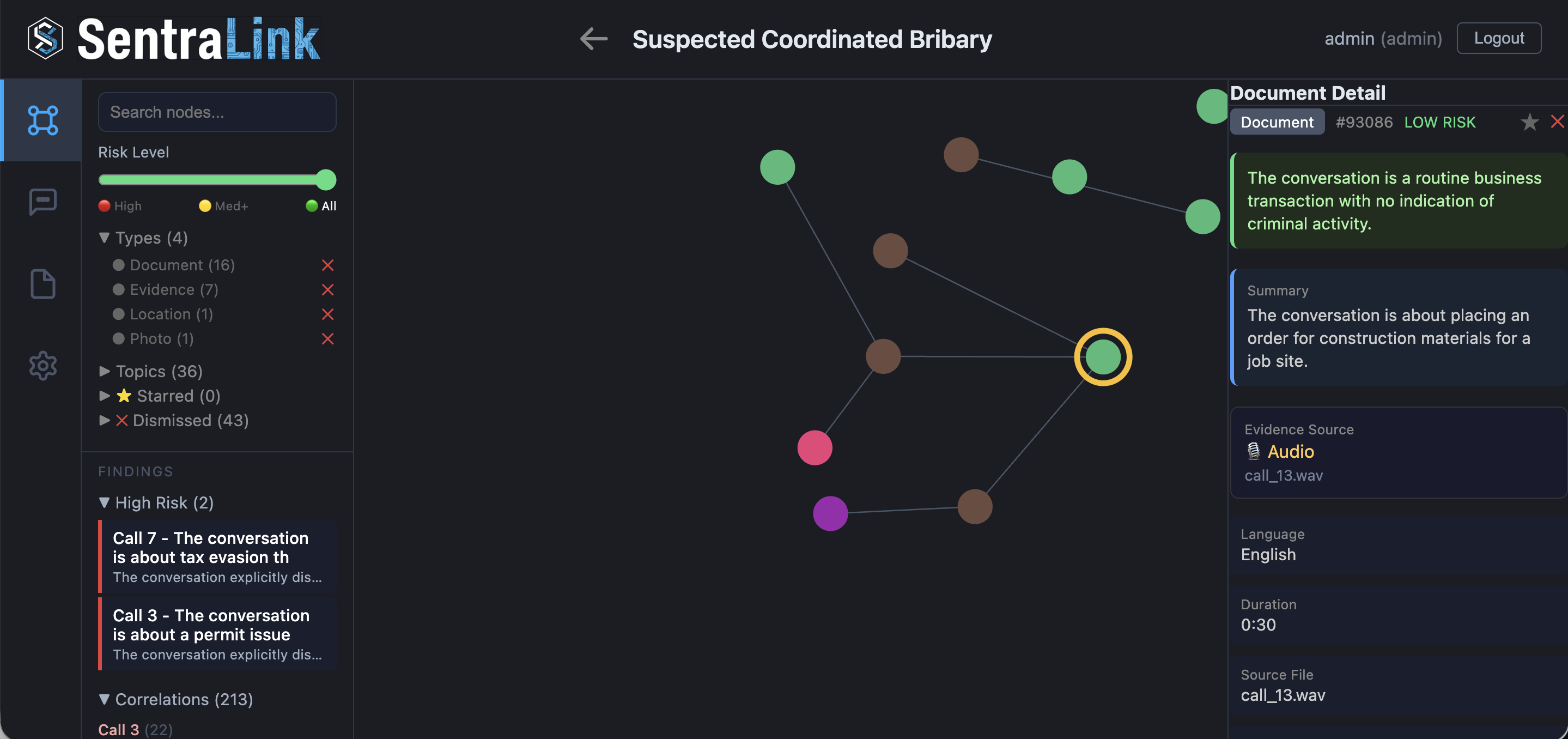

The Photo Connects to a Routine Business Call

The construction site photo is automatically correlated to Call 13 — a 30-second routine business call about placing an order for construction materials for a job site. The conversation itself contains no criminal activity whatsoever. But it mentions construction materials and a job site — the same context identified in the photo.

Cross-Evidence Correlation: A photo from social media connects to a phone call through shared topic language — “construction” appears in both the vision AI's description and the call transcript. This builds the suspect's activity profile even from non-criminal conversations.

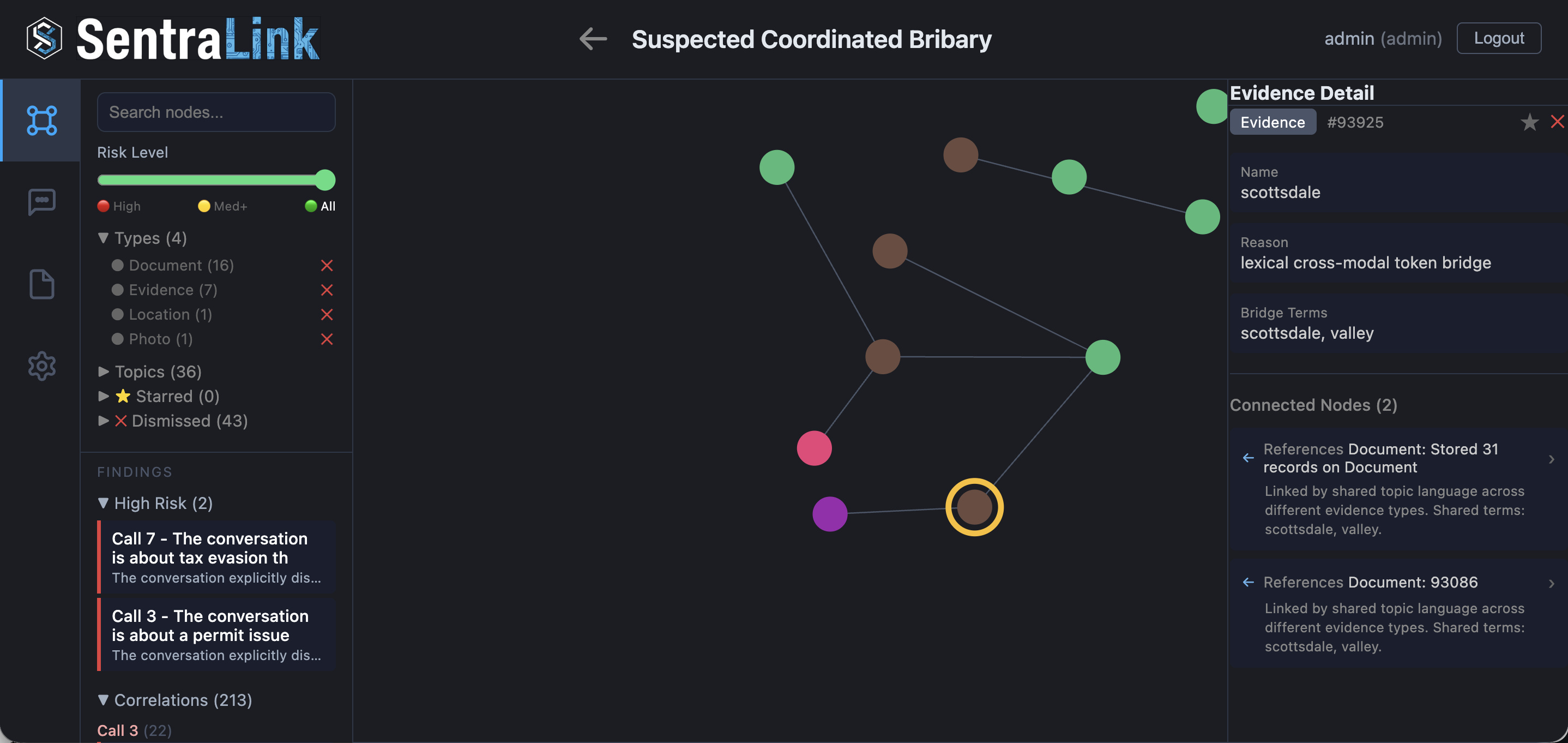

Scottsdale — The Location That Ties It Together

SentraLink's cross-modal analysis identifies “Scottsdale” as a shared term bridging different evidence types. The same location appears in the bribery call (Call 3, where the permit is being expedited), in the construction materials call (Call 13), and in the CDR cell tower records. Three separate pieces of evidence, spanning call audio and CDR data, point to one location.

Cross-Modal Token Bridge: SentraLink detects when the same term appears across different evidence types — a location mentioned in a phone call matched to a cell tower location in CDR records. This lexical bridge connects evidence that would otherwise remain siloed.

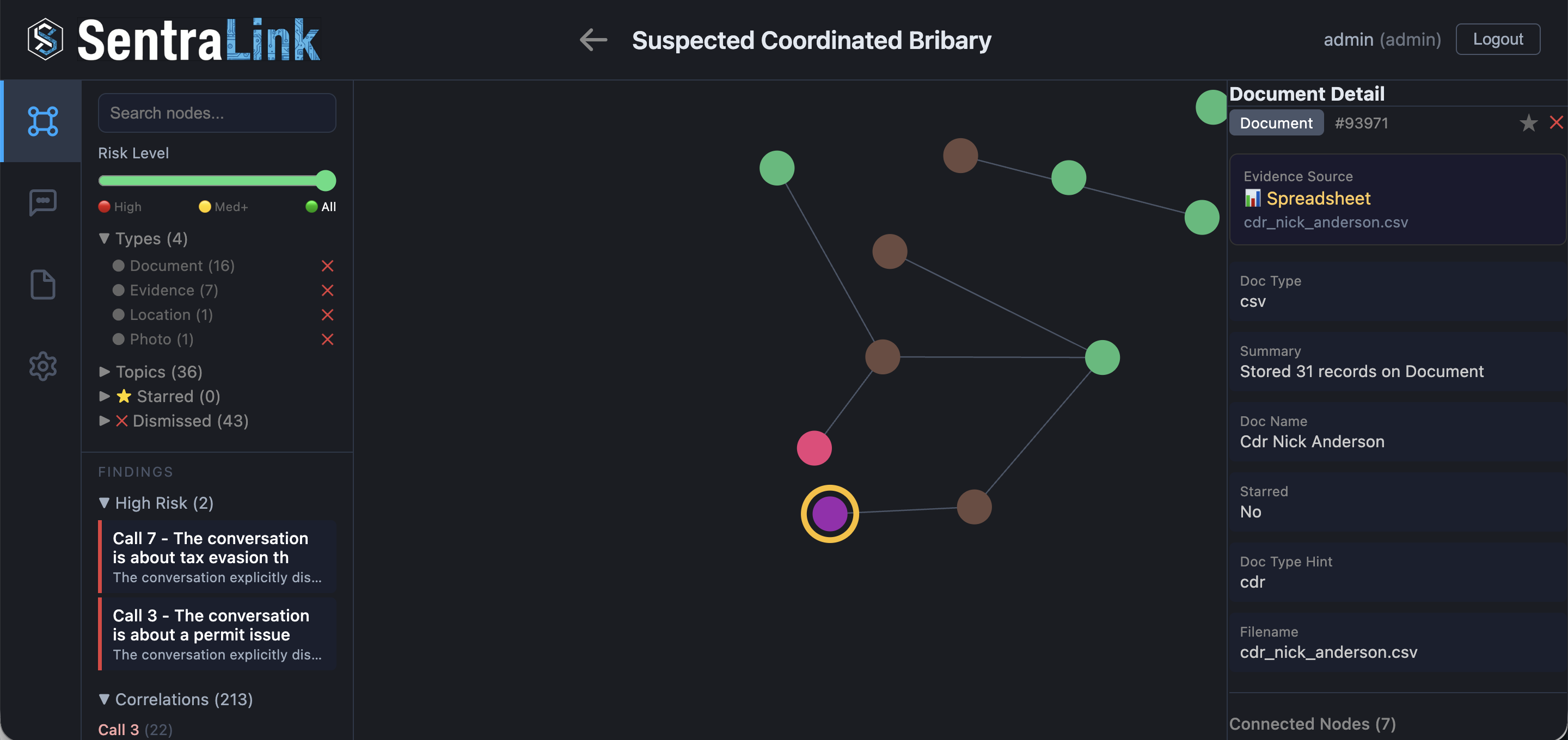
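A lexical bridge of the kind described above can be sketched as a search for tokens that occur in more than one evidence type. The function names, the four-letter minimum token length, and the sample texts below are assumptions made for illustration. The source does not say how SentraLink tokenizes or filters terms.

```python
import re
from collections import defaultdict


def tokens(text):
    """Lowercased alphabetic words of four or more letters (an arbitrary cutoff)."""
    return {word.lower() for word in re.findall(r"[A-Za-z]{4,}", text)}


def token_bridges(items, stopwords=frozenset()):
    """items: iterable of (item_id, evidence_type, text).

    Returns {token: sorted item ids} for tokens seen in two or more evidence types.
    """
    seen = defaultdict(set)
    for item_id, evidence_type, text in items:
        for token in tokens(text) - stopwords:
            seen[token].add((evidence_type, item_id))

    bridges = {}
    for token, refs in seen.items():
        if len({evidence_type for evidence_type, _ in refs}) >= 2:
            bridges[token] = sorted(item_id for _, item_id in refs)
    return bridges


# Invented sample texts, not the actual transcripts or CDR rows.
items = [
    ("call_3", "audio", "We need the Scottsdale permit fast"),
    ("call_13", "audio", "Deliver lumber to the Scottsdale site"),
    ("cdr_row_7", "cdr", "Tower SCOTTSDALE North"),
]
print(token_bridges(items))
# {'scottsdale': ['call_13', 'call_3', 'cdr_row_7']}
```

Requiring a token to span at least two evidence types, rather than two items, is what keeps a word repeated across many calls from counting as a bridge on its own.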

CDR Confirms: He Was There Multiple Times

The CDR (Call Detail Records) for Nick Anderson contains 29 entries with cell tower locations. SentraLink parsed the CSV, extracted the tower locations, and correlated them to the locations mentioned in calls. The CDR shows the suspect's phone connected to cell towers in the Scottsdale area on multiple occasions, placing him physically at the location where the building permit was being expedited through bribery.
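The CDR step can be illustrated with a short CSV filter. The column names (`timestamp`, `tower_location`) and the sample rows below are invented, since the case study does not show the actual CDR layout. Treat this as a sketch of the idea rather than the real parser.

```python
import csv
import io


def tower_pings(cdr_csv, place):
    """Return the timestamps of CDR rows whose tower location mentions `place`."""
    needle = place.lower()
    reader = csv.DictReader(io.StringIO(cdr_csv))
    return [
        row["timestamp"]
        for row in reader
        if needle in row["tower_location"].lower()
    ]


# Invented sample rows using a hypothetical column layout.
SAMPLE_CDR = """timestamp,tower_location
2024-01-05T09:12:00,Scottsdale North
2024-01-05T18:40:00,Phoenix Central
2024-01-09T11:03:00,Scottsdale Old Town
"""

pings = tower_pings(SAMPLE_CDR, "Scottsdale")
print(len(pings))  # 2
```

Returning the matching timestamps rather than a bare count keeps the information needed to place each ping on a timeline next to the calls.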

The Complete Picture: A bribery discussion in Call 3 mentions a Scottsdale permit → the bank statement shows a matching cash withdrawal → a social media photo shows the construction site → a routine call orders materials for the same site → the term “Scottsdale” bridges the call transcripts to the CDR → the CDR confirms the suspect was physically in Scottsdale multiple times. SentraLink connected audio, financial records, images, and cell tower data into a single coherent timeline.

How SentraLink Accelerated This Investigation

🔗 Cross-Evidence Correlation

Amounts from phone calls matched to bank transactions. Locations from conversations linked to CDR cell tower records. A photo's visual content connected to a call's transcript. SentraLink automatically finds the connections across different evidence types that would take an investigator days to discover.

🎤 Audio Intelligence

20 phone calls (over 2 hours of audio) were automatically transcribed with speaker diarization. Each call was analyzed for content, with entities extracted and topics identified. Out of 20 calls, the AI pinpointed the 2 that matter to the case (Calls 3 and 13) and classified the remaining 18 as routine conversations.

📊 Financial & Location Analysis

4,000 bank transactions parsed and correlated to verbal mentions in calls. 29 CDR records mapped to cell tower locations and cross-referenced with places discussed in conversations — building a physical timeline of the suspect's movements.

👁️ Multi-Modal Evidence Fusion

Audio recordings, bank statements, cell tower data, and social media photos — four completely different evidence types, all processed and interconnected in a single intelligence graph. A photo of a construction site connects to a phone call connects to a bank withdrawal connects to a cell tower ping.